Executive Summary

Jarvis is a modular research intelligence system designed to help instructional designers retrieve relevant academic literature without overload, drift, or loss of credibility.

It automates structured weekly searches, flags high-value research, detects conceptual drift, and requires explicit human approval before adoption. The system prioritizes transparency and traceability over speed.

This project demonstrates systems thinking, ethical AI integration, evaluation-driven design, and workflow transparency, all of which matter in research-driven environments.

The Problem

Instructional designers working in evidence-based environments face three recurring challenges:

- Literature overload: Searches return hundreds of results with low instructional relevance.

- Concept drift: Automated searches slowly drift away from the original research focus without warning.

- Low trust in AI outputs: Black-box tools hallucinate citations, over-generalize findings, or hide uncertainty.

Jarvis addresses these problems by prioritizing traceability, transparency, and human oversight at every stage of the workflow.

The Solution

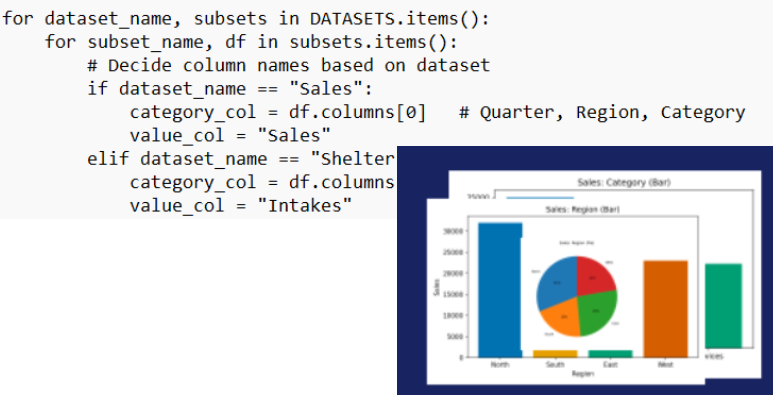

- Modular Queries

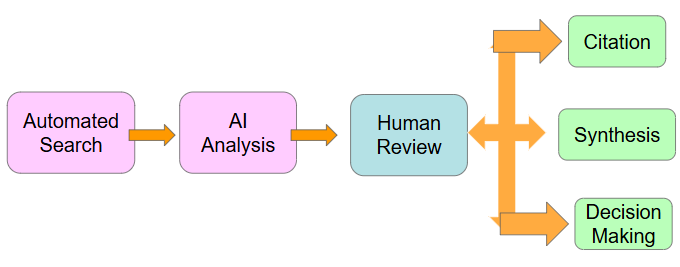

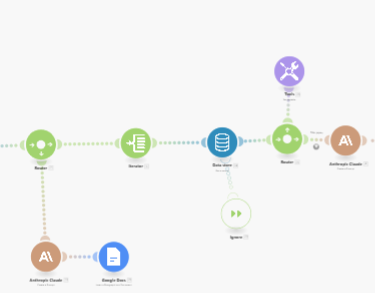

Separate research streams prevent cross-contamination and allow targeted refinement. - Automated Weekly Runs

Each module executes on a schedule, producing structured analyses rather than raw citation lists Every run produces a weekly overview, a high-value relevance flag, and a drift check. - Human Review Gate

All outputs require explicit human approval before citation or downstream use. - Logged Outputs

Logs are automatically generated to support audit, reflection, and iteration.
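The workflow above can be illustrated with a minimal Python sketch. It is not the Jarvis implementation, which runs in Make with Claude Sonnet and Semantic Scholar. Every name here (`WeeklyRun`, `drift_score`, `flags`, `approve`, `citable`, `log_line`), both thresholds, and the choice of term-overlap (Jaccard) distance as the drift measure are assumptions made for illustration; the source does not describe how drift or relevance is actually computed.

```python
import json
from dataclasses import asdict, dataclass, replace
from typing import List, Optional

# Hypothetical thresholds, chosen only for this sketch.
HIGH_VALUE_AT = 0.8
DRIFT_AT = 0.6


@dataclass(frozen=True)
class WeeklyRun:
    """One scheduled run of a single research module (illustrative shape)."""
    module: str
    run_date: str
    focus_terms: List[str]    # terms that define the module's original focus
    result_terms: List[str]   # terms found in this week's results
    relevance: float          # 0..1, assumed to come from an upstream analysis
    approved: bool = False    # stays False until a human signs off
    reviewer: Optional[str] = None


def drift_score(focus_terms, result_terms):
    """Return 1 minus the Jaccard similarity of the two term sets."""
    focus = {t.lower() for t in focus_terms}
    found = {t.lower() for t in result_terms}
    union = focus | found
    if not union:
        return 0.0
    return 1.0 - len(focus & found) / len(union)


def flags(run):
    """Compute the high-value flag and the drift warning for a run."""
    return {
        "high_value": run.relevance >= HIGH_VALUE_AT,
        "drift_warning": drift_score(run.focus_terms, run.result_terms) >= DRIFT_AT,
    }


def approve(run, reviewer):
    """Human review gate: return a copy of the run marked as approved."""
    return replace(run, approved=True, reviewer=reviewer)


def citable(runs):
    """Only approved runs may be cited or passed downstream."""
    return [r for r in runs if r.approved]


def log_line(run):
    """Serialize a run and its flags as one JSON line for the audit log."""
    return json.dumps({**asdict(run), **flags(run)}, sort_keys=True)


if __name__ == "__main__":
    run = WeeklyRun(
        module="feedback-design",  # hypothetical module name
        run_date="2024-01-08",
        focus_terms=["feedback", "scaffolding"],
        result_terms=["feedback", "gamification", "badges"],
        relevance=0.85,
    )
    print(log_line(run))                      # logged before review
    print(len(citable([run])))                # nothing is citable yet
    print(len(citable([approve(run, "reviewer-1")])))
```

In this sketch, approval is the only path into `citable`, so a flagged or high-scoring run still cannot reach downstream use without a named reviewer, and each log line records the flags alongside the approval state.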

Evidence of Impact

- Reduced low-value abstract review

- Identified persistent gaps

- Prevented silent scope drift

- Created an auditable workflow

Tools

Make • Claude Sonnet • Semantic Scholar • Structured logging

Skills Demonstrated

- Systems design

- AI governance

- Evaluation thinking

- Workflow documentation
